Approvals

Overview

In autobotAI, Approvals allow for a checkpoint within bot execution flows, where specific tasks—such as remediations, deletions, or changes to resources—can be approved or rejected before they are executed. This feature provides an additional layer of control, ensuring that sensitive actions are authorized by designated users.

How Approvals Work

Approvals are created automatically whenever a bot flow includes an Approval Node. During execution, when the bot reaches an Approval Node, it pauses and sends an approval request to the designated approver(s) specified within the node. This request can be sent through various channels, such as AWS SES, Google Chat, Slack, and Microsoft Teams.

The approver receives a message that links them to autobotAI’s Approvals section, where they can review and authorize or reject the action.
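
The pause-and-resolve behavior described above can be pictured as a small state machine. The following is a minimal sketch for illustration only: `ApprovalState`, `ApprovalRequest`, and `resolve` are hypothetical names, not part of the autobotAI API, and the bot name is made up.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class ApprovalState(Enum):
    PENDING = "pending"      # flow is paused at the Approval Node
    APPROVED = "approved"    # flow may continue
    REJECTED = "rejected"    # flow stops


@dataclass
class ApprovalRequest:
    """Hypothetical model of one approval request (not an autobotAI type)."""
    bot_name: str
    approvers: List[str]
    state: ApprovalState = ApprovalState.PENDING

    def resolve(self, approver: str, approve: bool) -> ApprovalState:
        """Record a decision from one of the designated approvers."""
        if approver not in self.approvers:
            raise PermissionError(f"{approver} is not a designated approver")
        if self.state is not ApprovalState.PENDING:
            raise ValueError("request has already been resolved")
        self.state = ApprovalState.APPROVED if approve else ApprovalState.REJECTED
        return self.state


request = ApprovalRequest("example-remediation-bot", ["security.manager@company.com"])
result = request.resolve("security.manager@company.com", approve=True)
print(result.value)
```

The sketch only accepts a decision from someone named in the node and only while the request is still pending, which mirrors the idea that a paused flow is resolved exactly once.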

Accessing Approvals in autobotAI


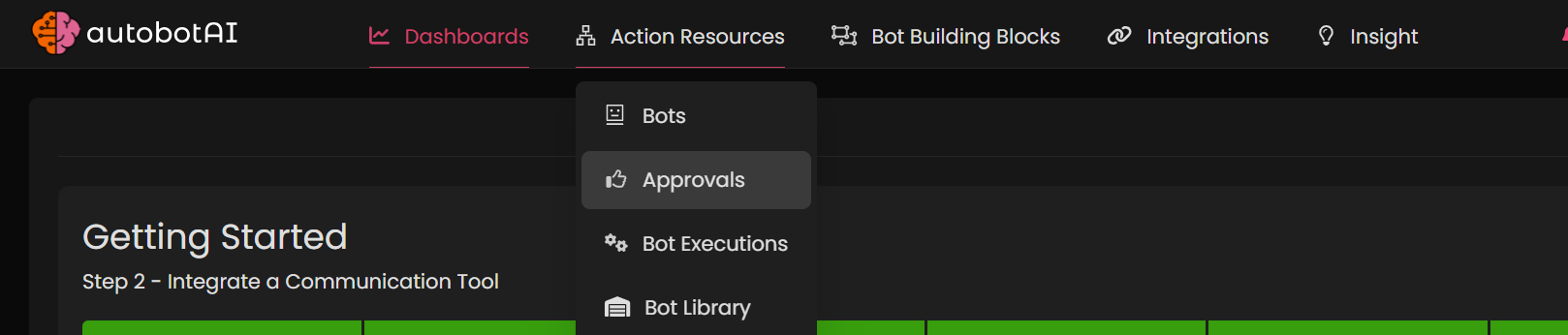

Navigate to the Approvals Section:

- In the autobotAI dashboard, go to Action Resources in the main menu.

- Select Approvals from the dropdown. This will display a list of bots pending approval.


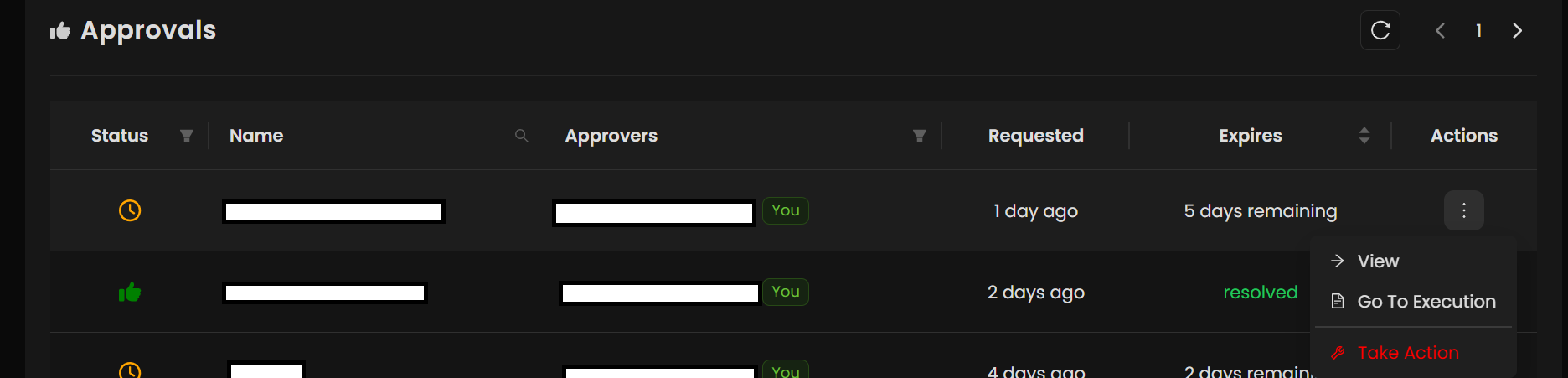

Review Pending Approvals:

- In the Approvals section, you will see a list of all bots currently awaiting approval.

- Locate the bot that requires your authorization.


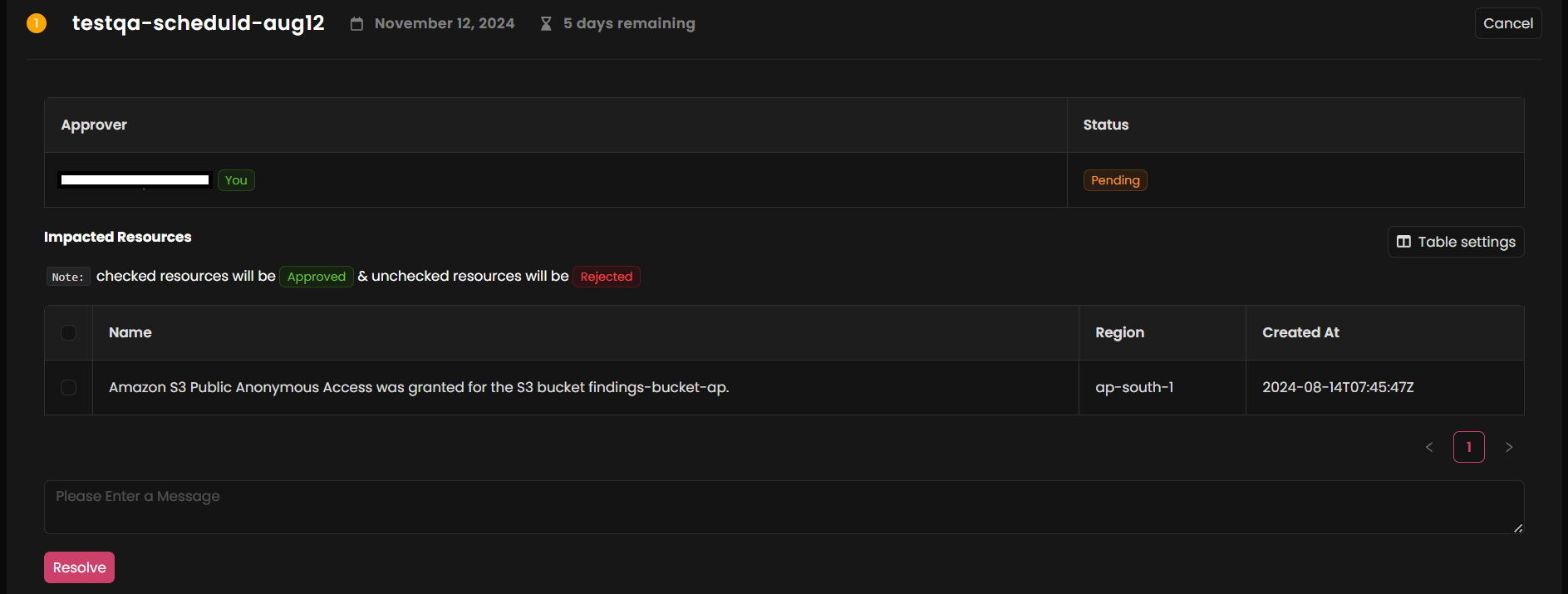

Approve or Reject an Action:

- Next to each pending approval, click on the three dots in the Action column.

- From the available options (View, Go to Action, Take Action), select Take Action.

- In the approval modal, review the details and choose to approve or reject the action by checking the relevant box.

- Type Accept to approve or Reject to deny, and then click Resolve.

Once you complete the approval, the bot flow either proceeds with execution (if approved) or stops (if rejected).

Important Note

- The bot flow continues only if the approval action is completed. If no decision is made within the time limit configured in the Approval Node, the flow stops at the approval stage.
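
As a rough illustration of this rule, the sketch below decides what a flow does at the approval stage. The function name `flow_status` and the status strings are assumptions made for this example and do not come from autobotAI. The sketch also assumes that any recorded decision was made before the time limit elapsed.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def flow_status(requested_at: datetime, timeout: timedelta,
                decision: Optional[str], now: datetime) -> str:
    """Return what the flow does at the Approval Node (hypothetical helper).

    decision is "approved", "rejected", or None while still pending.
    """
    if decision == "approved":
        return "continue"
    if decision == "rejected":
        return "stopped"
    # No decision yet: keep waiting until the configured time limit elapses.
    if now - requested_at >= timeout:
        return "stopped"
    return "waiting"


start = datetime(2025, 11, 16, 14, 0, tzinfo=timezone.utc)
one_hour = timedelta(hours=1)
print(flow_status(start, one_hour, None, start + timedelta(minutes=30)))  # waiting
print(flow_status(start, one_hour, None, start + timedelta(hours=2)))     # stopped
```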

Using approvals helps ensure that critical changes within your integrations are monitored and authorized by the right personnel, enhancing security and compliance in your autobotAI environment.

Responsible AI: Human Oversight & Accountability

Approval workflows in autobotAI ensure humans remain in control of critical decisions while maintaining a complete audit trail of every authorization.

Why Human Approval Matters

In security and compliance operations, the cost of wrong decisions is significant:

- Blocking a legitimate user = lost productivity and frustrated team members

- Missing a real threat = security breach and data loss risk

- Applying wrong remediation = unintended consequences and system damage

autobotAI's principle: AI investigates and recommends. Humans decide.

How Approval Workflows Ensure Accountability

Approval workflows work through a structured process:

Step 1: Bot Completes Investigation

- Gathers relevant data from multiple sources

- Analyzes information against policies and rules

- Calculates risk level or compliance impact

- Determines recommended action

Step 2: Approval Request is Created

- Contextual summary is generated for the approver

- Key risks and implications are highlighted

- Evidence supporting the recommendation is included

- Multiple action options are presented

Step 3: Human Reviews and Decides

- Approver receives a notification with full context in the configured notification channels (e.g., code repository pull request, Slack, Microsoft Teams, Jira ticket).

- Approver can review complete execution logs.

- Approver can see historical similar cases and how they were handled.

- Approver makes an informed decision: approve, reject, or customize the response instructions for the agentic workflow.

Step 4: Action is Executed and Recorded

- If approved: bot executes action, logs the approval with approver name, reason and timestamp.

- If rejected: bot stops further remediation and response, updates risk register, logs the recommendation for review and improvement.

- If customized: bot executes modified action with approver's customizations noted.

- All decisions logged with full accountability trail.
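
The three outcomes in Step 4 can be sketched as one function that maps a decision to the action executed and the entry logged. This is an illustrative sketch under stated assumptions: `apply_decision`, its parameters, and the dictionary keys are hypothetical and do not describe how autobotAI stores decisions.

```python
from datetime import datetime, timezone
from typing import Optional


def apply_decision(decision: str, recommended_action: str, approver: str,
                   reason: str, custom_action: Optional[str] = None) -> dict:
    """Map an approver's decision to the executed action and a log entry."""
    if decision == "approved":
        executed = recommended_action
    elif decision == "customized":
        if not custom_action:
            raise ValueError("a customized decision needs a custom_action")
        executed = custom_action
    elif decision == "rejected":
        executed = None  # bot stops further remediation
    else:
        raise ValueError(f"unknown decision: {decision}")
    # Every outcome, including a rejection, produces a log entry.
    return {
        "decision": decision,
        "approver": approver,
        "reason": reason,
        "recommended_action": recommended_action,
        "executed_action": executed,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


log_entry = apply_decision(
    "customized",
    recommended_action="disable user account",
    approver="security.manager@company.com",
    reason="lower impact while the team investigates",
    custom_action="force password reset",
)
print(log_entry["executed_action"])  # force password reset
```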

Example: Security Incident Response Approval

Scenario: Suspicious data access detected

What the bot investigated:

- User login patterns (unusual after-hours access from a new location, detected by the threat detection tool)

- File access patterns (large bulk download of sensitive data, detected by the DLP solution)

- Threat intelligence correlation with threat intel integrations (pattern matches known attack groups)

- Risk calculation: CRITICAL (confidence: 92%, assumption score: 7%)

- Recommended action: Immediately reduce the permission levels of the user account and disable the access key used for programmatic access.

Approval request received by: Security Manager and/or identity owner (security.manager@company.com, john'sreportingmanager@company.com)

Context provided to approver:

⚠️ CRITICAL INCIDENT: Potential unauthorized data access

Affected User: john.smith@company.com

Risk Level: CRITICAL (confidence: 92%)

Evidence Summary:

• Unusual login: 2:45 AM from new geographic location (China)

• File access: Downloaded 47 GB of customer data in 8 minutes

• Threat intelligence: Pattern matches known APT group tactics

• Internal data: User has no history of after-hours access

AI Recommended action: Disable user account immediately

Alternative actions available:

• Force password reset only (lower impact)

• Revoke data access but keep account active (investigate longer)

• Investigate further (delay action 1 hour while team digs deeper)

Decision needed from: Security Manager and John's reporting manager

Timeline: Action will execute within 2 minutes if approved

How the approver responded:

- Reviewed the execution log showing evidence

- Attempted to call user (no answer)

- Checked with user's manager (confirmed user is on vacation in US)

- Decision: Approved immediate account disable

What was logged:

Approval: APPROVED

Approver: security.manager@company.com

Approval timestamp: 2025-11-16 14:45:23 UTC

Approver notes: "User confirmed on vacation; unauthorized access confirmed"

Action executed: Account disabled for john.smith@company.com

Execution timestamp: 2025-11-16 14:45:31 UTC

Notifications sent: user account, IT team, Security team, CISO

Incident case created: INC-2025-00847

Audit logged: Yes, complete trail available
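
A log entry like the one above could be represented as a structured record so it can be exported and queried during audits. The sketch below is an assumption about shape only: `AuditRecord` and its field names are hypothetical, and the values are copied from the example above, with timestamps rewritten in ISO 8601 form.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical structured form of the logged approval above."""
    approval: str
    approver: str
    approval_timestamp: str   # ISO 8601, UTC
    approver_notes: str
    action_executed: str
    execution_timestamp: str  # ISO 8601, UTC
    incident_case: str

    def seconds_to_execute(self) -> float:
        """Delay between the approval and the executed action."""
        approved = datetime.fromisoformat(self.approval_timestamp)
        executed = datetime.fromisoformat(self.execution_timestamp)
        return (executed - approved).total_seconds()


record = AuditRecord(
    approval="APPROVED",
    approver="security.manager@company.com",
    approval_timestamp="2025-11-16T14:45:23+00:00",
    approver_notes="User confirmed on vacation; unauthorized access confirmed",
    action_executed="Account disabled for john.smith@company.com",
    execution_timestamp="2025-11-16T14:45:31+00:00",
    incident_case="INC-2025-00847",
)
print(json.dumps(asdict(record), indent=2))
print(record.seconds_to_execute())  # 8.0
```

Keeping both timestamps in the record makes it possible to check that the action ran inside the stated execution window (here, 8 seconds after approval).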

Why This Approach is Responsible AI

Human Oversight: No critical decision is made by AI alone. Humans review, decide, and take responsibility.

Accountability: Clear record showing who approved what, when, and why—enabling audits and compliance validation.

Flexibility: Approvers can customize responses based on their knowledge and context, not just accept/reject.

Continuous Learning: AI learns from approval/rejection patterns to improve future recommendations.

Responsibility: Organizations can prove they had human judgment in the loop for all critical actions.

Configuring Approval Workflows

When you create a bot with approvals, configure:

Approval Node Configuration:

Trigger event: When the bot reaches this node, as a deterministic step in the flow.

Risk level: Which actions require approval (CRITICAL, HIGH, MEDIUM)

Approver group: Who gets the approval request (security_managers, resource_owner, Team_lead)

Notification channels: Email, Slack, Microsoft Teams, code repository, ticketing tool, etc.

Approval timeout: How long before escalation (1 hour, 4 hours, 24 hours)

Escalation path: Who to escalate to if approval times out

Context to include: What information approver sees before deciding

Allowed actions: Approve, Reject, Customize, Request more info
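
To make these settings concrete, the sketch below models them as a plain data structure with a basic sanity check. It is not the autobotAI configuration schema: `ApprovalNodeConfig`, its field names, and the `validate` rules are assumptions for illustration, and in the product these values are set on the Approval Node itself.

```python
from dataclasses import dataclass, field
from datetime import timedelta
from typing import List

# Hypothetical identifiers for the four allowed actions listed above.
KNOWN_ACTIONS = {"approve", "reject", "customize", "request_more_info"}


@dataclass
class ApprovalNodeConfig:
    risk_levels: List[str]            # which risk levels require approval
    approver_group: str               # who receives the request
    notification_channels: List[str]  # where the request is sent
    timeout: timedelta                # how long before escalation
    escalation_path: str              # who to escalate to on timeout
    context_fields: List[str] = field(default_factory=list)
    allowed_actions: List[str] = field(
        default_factory=lambda: ["approve", "reject"])

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the config looks sane."""
        problems = []
        if not self.notification_channels:
            problems.append("at least one notification channel is required")
        if self.timeout <= timedelta(0):
            problems.append("timeout must be positive")
        unknown = set(self.allowed_actions) - KNOWN_ACTIONS
        if unknown:
            problems.append(f"unknown actions: {sorted(unknown)}")
        return problems


config = ApprovalNodeConfig(
    risk_levels=["CRITICAL", "HIGH"],
    approver_group="security_managers",
    notification_channels=["email", "slack"],
    timeout=timedelta(hours=1),
    escalation_path="Team_lead",
    context_fields=["evidence_summary", "execution_log"],
    allowed_actions=["approve", "reject", "customize"],
)
print(config.validate())  # []
```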

Best Practice: Lower thresholds for critical actions

- CRITICAL risk → requires approval immediately

- HIGH risk → requires approval, but 1-hour timeout

- MEDIUM risk → may auto-approve with logging if from trusted source

- LOW risk → log and auto-approve, escalate anomalies
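
The tiering above can be written as a small lookup. The sketch below is one possible reading of those four rules, not an autobotAI feature: `Policy` and `approval_policy` are hypothetical names, and treating MEDIUM risk from an untrusted source as requiring approval is an assumption.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass(frozen=True)
class Policy:
    requires_approval: bool
    timeout: Optional[timedelta] = None
    note: str = ""


def approval_policy(risk_level: str, trusted_source: bool = False) -> Policy:
    """Translate a risk level into an approval requirement (illustrative)."""
    level = risk_level.upper()
    if level == "CRITICAL":
        return Policy(True, note="request approval immediately")
    if level == "HIGH":
        return Policy(True, timeout=timedelta(hours=1))
    if level == "MEDIUM":
        if trusted_source:
            return Policy(False, note="auto-approve with logging")
        return Policy(True)  # assumption: untrusted sources still need approval
    if level == "LOW":
        return Policy(False, note="log and auto-approve; escalate anomalies")
    raise ValueError(f"unknown risk level: {risk_level}")


print(approval_policy("HIGH").timeout)                    # 1:00:00
print(approval_policy("medium", trusted_source=True).requires_approval)  # False
```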

Best Practices for Approvers

When reviewing approval requests:

- Review the evidence - Don't just skim the summary, look at the execution logs

- Ask "why" - Understand the reasoning before approving

- Consider context - Is this normal? Are there special circumstances?

- Document your decision - Your notes are valuable for audits and learning

- Escalate uncertainty - If unsure, request more information rather than guessing

- Provide feedback - Tell the security team if recommendations are consistently right or wrong

Approval Metrics to Monitor

Track these metrics to ensure approval processes are working:

- Approval rate: % of recommendations that are approved (should be 80%+)

- Rejection rate: % of recommendations that are rejected (investigate spikes)

- Override rate: % of approvals that deviate from recommendation

- Average approval time: How long until decision is made (should be <15 min)

- Timeout escalations: % of approvals that timeout and escalate (should be <5%)

What each metric tells you:

- High approvals (80%+) = AI recommendations are good, trust building

- Sudden increase in rejections = Check if rules changed or something unusual is happening

- High override rate = Approvers know better context than AI; update recommendations

- Slow approvals = Approvers may need more context, or there may be a staffing issue

- Timeouts = Escalation policy might be too aggressive
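
If approval records are exported, the metrics above can be computed with a few lines of code. The sketch below assumes a hypothetical record format with a `decision` field ("approved", "rejected", "customized", or "timed_out") and a `minutes_to_decision` field. It is not an autobotAI export format, and counting customized decisions as overrides is an assumption about how override rate is defined.

```python
from statistics import mean


def approval_metrics(records):
    """Summarize approval records as percentages and an average decision time."""
    total = len(records)
    if total == 0:
        raise ValueError("no approval records to summarize")

    def pct(decision):
        return 100.0 * sum(r["decision"] == decision for r in records) / total

    # Timed-out requests have no decision time, so they are excluded here.
    times = [r["minutes_to_decision"] for r in records
             if r["minutes_to_decision"] is not None]
    return {
        "approval_rate": pct("approved"),
        "rejection_rate": pct("rejected"),
        "override_rate": pct("customized"),
        "timeout_rate": pct("timed_out"),
        "avg_approval_minutes": mean(times) if times else None,
    }


sample = [
    {"decision": "approved", "minutes_to_decision": 5.0},
    {"decision": "approved", "minutes_to_decision": 10.0},
    {"decision": "rejected", "minutes_to_decision": 15.0},
    {"decision": "timed_out", "minutes_to_decision": None},
]
metrics = approval_metrics(sample)
print(metrics["approval_rate"])         # 50.0
print(metrics["avg_approval_minutes"])  # 10.0
```

With this sample, the approval rate (50%) and timeout rate (25%) both fall outside the targets listed above, which is the kind of signal these metrics are meant to surface.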